Introduction

Since its inception, artificial intelligence (AI) has continuously evolved, leading to remarkable advancements in natural language processing (NLP). Among the most noteworthy innovations in this field is the Generative Pre-trained Transformer 3 (GPT-3), developed by OpenAI. Described in a paper published in May 2020 and made available through a private-beta API in June 2020, GPT-3 has garnered significant attention for its ability to generate human-like text from a textual prompt. This report explores the fundamental aspects of GPT-3, including its architecture, training methodology, potential applications, and associated ethical considerations.

Background: The Evolution of GPT Models

The journey to GPT-3 began with the introduction of the original Generative Pre-trained Transformer (GPT-1) in 2018. GPT-1 showed that a transformer-based model pre-trained on unlabeled text could then be fine-tuned for specific language tasks. The subsequent release, GPT-2 (2019), scaled this approach to 1.5 billion parameters, allowing it to perform various language tasks without task-specific training. Building on its predecessors, OpenAI introduced GPT-3 with 175 billion parameters, more than 100 times the size of GPT-2 and the largest dense language model reported at the time of its release.

Architecture and Technical Specifications

GPT-3 is rooted in the transformer architecture, which revolutionized the field of NLP through its attention mechanisms. Unlike traditional recurrent neural networks (RNNs), transformers can process entire sequences of data simultaneously, enabling more efficient training and contextual understanding.
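The attention computation mentioned above can be illustrated with a minimal sketch of scaled dot-product self-attention in plain Python. This is an illustration under simplifying assumptions, not GPT-3's implementation: the function names are hypothetical, the queries, keys, and values are the raw input vectors rather than learned projections, and the loop over positions is written out for readability where real implementations use batched matrix operations over the whole sequence at once.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(queries, keys, values):
    """Scaled dot-product attention where position i sees only positions <= i."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # Score the query against each visible key (the causal mask
        # is applied by simply not scoring future positions).
        scores = [
            sum(a * b for a, b in zip(q, keys[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # Each output is a weighted average of the visible value vectors.
        out = [
            sum(w * v[c] for w, v in zip(weights, values))
            for c in range(len(values[0]))
        ]
        outputs.append(out)
        # Future positions receive zero weight.
        all_weights.append(weights + [0.0] * (len(keys) - i - 1))
    return outputs, all_weights

# Three toy 2-dimensional token vectors (hypothetical values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = causal_self_attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one position draws on itself and on earlier positions; the first position can only attend to itself, so its output equals its own value vector.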

Key Features of the Transformer Architecture:

- Attention Mechanism: This component allows GPT-3 to weigh the importance of different words in a sentence, facilitating better context comprehension.

- Scalability: The architecture is highly scalable, allowing for the increase of model size by simply stacking more layers and attention heads.

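- Autoregressive Language Modeling: In pre-training, GPT-3 learns by predicting the next token in a sequence given only the preceding tokens, which is what lets it generate coherent text one token at a time. This differs from masked language modeling, used by models such as BERT, where hidden words are predicted from context on both sides.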
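The next-word prediction objective in the list above can be sketched as follows. This is a toy illustration with hypothetical names and made-up probabilities: it splits on whole words for readability, whereas GPT-3 operates on subword tokens, and the "model output" is a hand-written dictionary rather than a real prediction.

```python
import math

def next_token_pairs(tokens):
    """Turn one token sequence into (context, target) training examples."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def cross_entropy(predicted_probs, target):
    """Loss for one example: negative log-probability of the true next token."""
    return -math.log(predicted_probs[target])

tokens = ["the", "cat", "sat", "down"]
pairs = next_token_pairs(tokens)
for context, target in pairs:
    print(context, "->", target)

# Hypothetical model output for the context ["the", "cat"].
probs = {"sat": 0.7, "ran": 0.2, "down": 0.1}
loss = cross_entropy(probs, "sat")
print(f"loss for predicting 'sat': {loss:.3f}")
```

Every position in the text yields a training example, and the loss shrinks as the model assigns higher probability to the word that actually came next.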

GPT-3's immense parameter count necessitated vast computational resources for its training. OpenAI trained the model on a diverse range of text, drawn largely from filtered web crawl data along with books and Wikipedia, allowing it to learn from a wide variety of writing styles, topics, and contexts. Training was self-supervised: no human-written labels were required, because the next word in the text itself serves as the training target. This enabled the model to develop broad statistical knowledge of human language.
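To give a sense of scale, the following back-of-the-envelope sketch estimates the parameter count, the memory needed just to store the weights, and the training compute. The architecture figures (96 layers, model width 12,288, vocabulary of 50,257 tokens, context of 2,048 tokens, roughly 300 billion training tokens) are assumptions taken from the published GPT-3 paper rather than from this report, and the formulas are common approximations that ignore biases and layer-normalization parameters.

```python
# Assumed configuration of the largest GPT-3 model (from the GPT-3 paper).
N_LAYERS = 96
D_MODEL = 12288
VOCAB_SIZE = 50257
CONTEXT_LEN = 2048
TRAIN_TOKENS = 300e9

def estimate_parameters(n_layers, d_model, vocab_size, context_len):
    """Approximate parameter count of a decoder-only transformer."""
    # Per block: ~4*d^2 for the attention projections (Q, K, V, output)
    # plus ~8*d^2 for the feed-forward layers (two d x 4d matrices).
    block_params = 12 * d_model ** 2
    # Token embeddings plus learned position embeddings.
    embedding_params = (vocab_size + context_len) * d_model
    return n_layers * block_params + embedding_params

params = estimate_parameters(N_LAYERS, D_MODEL, VOCAB_SIZE, CONTEXT_LEN)

# Storing each weight as a 16-bit float takes 2 bytes.
fp16_gigabytes = params * 2 / 1e9

# Rule of thumb: about 6 floating-point operations per parameter
# per training token (forward and backward pass combined).
train_flops = 6 * params * TRAIN_TOKENS

print(f"parameters:     {params / 1e9:.1f} billion")
print(f"fp16 weights:   {fp16_gigabytes:.0f} GB")
print(f"training FLOPs: {train_flops:.2e}")
```

The estimate lands close to the stated 175 billion parameters, and the weights alone occupy hundreds of gigabytes at 16-bit precision, far more than a single accelerator's memory, which is one reason training requires large multi-device clusters.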

Performance and Capabilities

One of the most compelling aspects of GPT-3 is its versatility. The model has shown remarkable proficiency in multiple NLP tasks, such as:

- Text Generation: GPT-3 can generate cohesive and contextually relevant text based on a prompt, making it valuable for content creation, storytelling, and creative writing.

- Language Translation: The model can perform translation tasks across various languages, showcasing its understanding of linguistic nuances.

- Question Answering: GPT-3 can provide insightful answers to general knowledge questions, offering a glimpse into the model's capability to understand and process information.

- Chatbots and Conversational Agents: The model's ability to simulate human-like dialogue has led to the development of advanced chatbots that provide customer service, tutoring, and companionship.

- Code Generation: GPT-3 has also demonstrated the ability to generate code snippets based on natural language instructions, thus serving as a useful tool for developers.
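All of the tasks above are posed to the model the same way: as text to be continued. A minimal sketch of how a translation request might be framed as a prompt with a few worked examples is shown below. The function name, the "English:"/"French:" labels, and the layout are illustrative assumptions, not a prescribed format, and no API call is shown because this report does not describe a specific interface.

```python
def build_few_shot_prompt(task_description, examples, query):
    """Assemble a text prompt from a task description, worked examples, and a query."""
    lines = [task_description, ""]
    for source, target in examples:
        lines.append(f"English: {source}")
        lines.append(f"French: {target}")
        lines.append("")
    # The prompt ends where the model is expected to continue writing.
    lines.append(f"English: {query}")
    lines.append("French:")
    return "\n".join(lines)

prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("Good morning.", "Bonjour."), ("Thank you.", "Merci.")],
    "See you tomorrow.",
)
print(prompt)
```

Because the task is specified entirely in the prompt, switching from translation to question answering or code generation means changing the text, not retraining the model.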

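The performance of GPT-3 is notable not just for its breadth but also for its depth. In evaluations reported by OpenAI, human readers had difficulty telling short GPT-3-generated news articles apart from human-written ones, though the model can also produce fluent text that is factually wrong or that loses coherence over longer passages. These results have led to growing interest in its applications across various fields.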

Applications of GPT-3

The versatility of GPT-3 has led to a wide array of applications across different industries:

- Content Creation: Journalists, marketers, and bloggers are leveraging GPT-3 to generate articles, ad copies, and social media posts, enhancing productivity and creativity.

- Education: Educators are using GPT-3 for tutoring, generating quizzes, and providing explanations for complex topics, making personalized learning more accessible.

- Healthcare: GPT-3's capabilities can assist healthcare professionals by generating patient reports, summarizing medical literature, and enhancing telemedicine interactions.

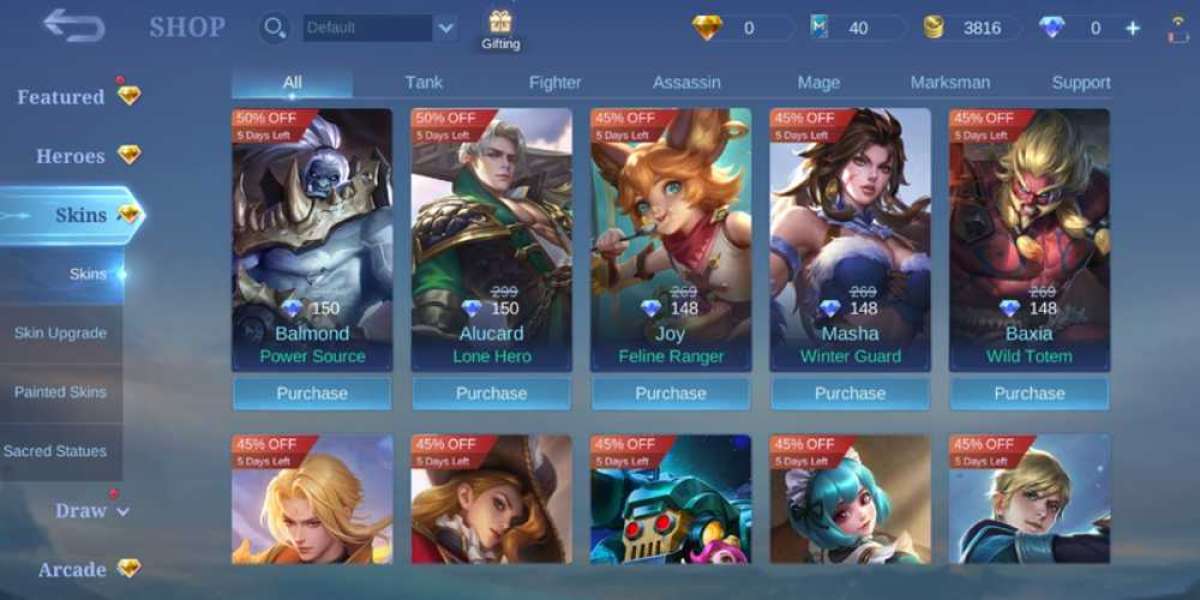

- Entertainment: The gaming industry is exploring the use of GPT-3 for creating dynamic narratives and engaging non-player characters (NPCs) capable of interactive storytelling.

- Software Development: Codex, derived from GPT-3, is specifically designed to assist programmers by generating code and providing suggestions based on natural language descriptions of functionality.

Ethical Considerations and Challenges

Despite its remarkable capabilities, the deployment of GPT-3 raises several ethical concerns and challenges:

- Misinformation: Given its ability to generate coherent text, GPT-3 can be used to create and spread misinformation. The potential for generating fake news, hoaxes, or misleading information poses a significant risk.

- Bias: Like many AI models, GPT-3 can inherit biases present in the data it was trained on. This bias can lead to the propagation of stereotypes and discriminatory content, highlighting the necessity for responsible AI deployment.

- Environmental Impact: The immense computational power required to train models like GPT-3 raises concerns about energy consumption and environmental sustainability.

- Job Displacement: The automation of tasks traditionally performed by humans, such as writing and coding, may lead to job displacement in certain sectors, creating economic and social challenges.

- Intellectual Property: The question of ownership arises with GPT-3-generated content. Who holds the rights to the text produced by the AI? This ambiguity poses challenges for legal frameworks surrounding intellectual property.

Future Directions

The development of GPT-3 marks a significant milestone in the field of AI and NLP, but it also highlights the need for continuous advancements and responsible practices. Future directions for research and applications may include:

- Fine-tuning for Specific Domains: While GPT-3 is a generalist model, future iterations may focus on specialized data to improve performance in specific industries, such as law or medicine.

- Improving Robustness and Controllability: Ongoing efforts aim to make AI models more controllable, allowing users to influence the style and tone of generated text while mitigating biases.

- Collaborative AI: The growth of human-AI collaboration is a promising area, where AI can augment human creativity and decision-making rather than replace it.

- Ethical Guidelines and Regulation: As the use of models like GPT-3 increases, establishing ethical guidelines and regulatory frameworks will be essential to address potential risks and promote responsible AI use.

Conclusion

GPT-3 represents a landmark achievement in the field of artificial intelligence, showcasing the impressive capabilities of modern language models in generating human-like text across various applications. While its potential is vast, the ethical implications and challenges associated with its use necessitate careful consideration. As we navigate the evolving landscape of AI, fostering responsible practices and continuing to refine these technologies will be essential for ensuring that they serve humanity’s best interests. The dialogue surrounding AI, ethics, and innovation will be crucial as we look towards the future of intelligent systems.